The `worker_rlimit_nofile` directive is one of the most misunderstood settings in NGINX configuration. This comprehensive guide explains how to properly tune **worker_rlimit_nofile** for optimal performance, including the correct formula, system-level configuration, and common pitfalls to avoid.

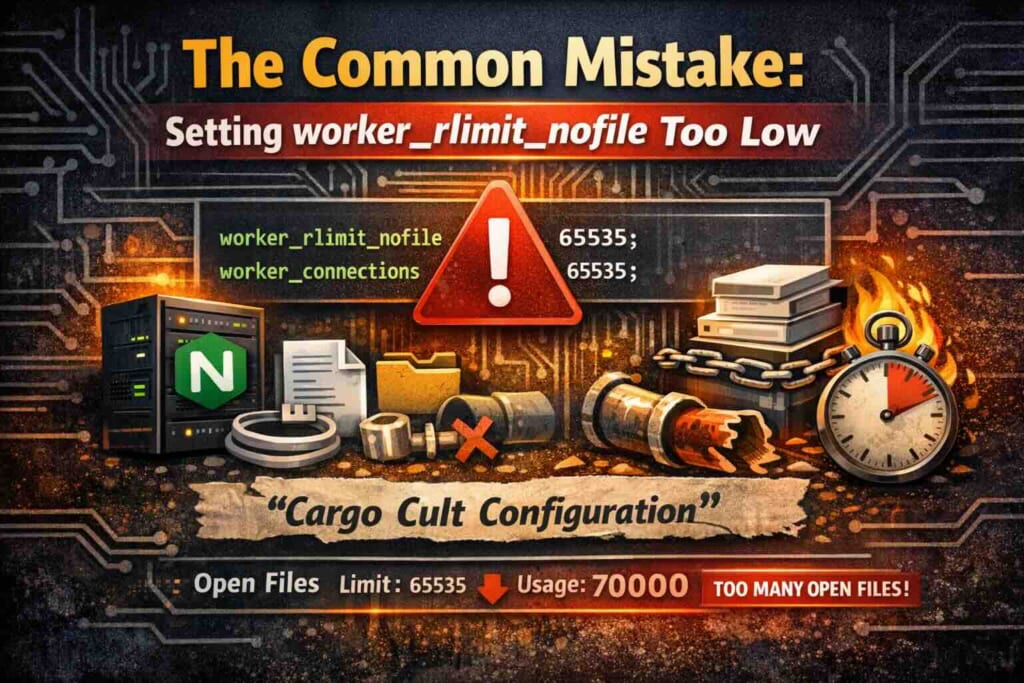

## The Common Mistake: Setting worker_rlimit_nofile Too Low

Before diving into the right approach, let’s address what you’ll find on most blogs and Stack Overflow answers:

```nginx
worker_rlimit_nofile 65535;
worker_connections 65535;
```

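This advice is not necessarily wrong, but it is often applied blindly. It is cargo cult configuration: values copied because they “seem to work”, with no grasp of the underlying mechanics. Setting both directives to the same value also leaves a worker no spare descriptors for upstream sockets, static files, or logs once its connection slots fill up.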

## What Is worker_rlimit_nofile and Why Does It Matter?

The `worker_rlimit_nofile` directive sets the maximum number of **open files** (file descriptors) that each NGINX worker process can use. In Unix-like operating systems, this includes:

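- **Sockets** (one for each client or upstream connection)

- **Open files** (static content, cached responses, log files)

- **Pipes and socket pairs** (inter-process communication, such as the channel between master and workers)

- **Event-loop descriptors** (for example, the `epoll` instance itself; timers and signals do not consume descriptors)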

Here’s what the NGINX source code (`ngx_process_cycle.c`) reveals about how this works:

```c
if (ccf->rlimit_nofile != NGX_CONF_UNSET) {
    rlmt.rlim_cur = (rlim_t) ccf->rlimit_nofile;
    rlmt.rlim_max = (rlim_t) ccf->rlimit_nofile;

    if (setrlimit(RLIMIT_NOFILE, &rlmt) == -1) {
        ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                      "setrlimit(RLIMIT_NOFILE, %i) failed",
                      ccf->rlimit_nofile);
    }
}
```

This code tells us something crucial: NGINX calls `setrlimit(RLIMIT_NOFILE, …)` for **each worker process** after it forks. This means:

1. The directive **only affects worker processes**, not the master process

2. It sets **both soft and hard limits** to the same value

3. If the system doesn’t allow the requested limit, NGINX logs an error but continues
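The same behavior can be reproduced in a shell. The sketch below is an illustration, not something NGINX runs: `apply_nofile_limit` is a hypothetical helper that uses the shell's `ulimit` builtin inside a subshell, so the calling shell keeps its own limits, much as the master process keeps its limits while each worker changes its own.

```bash
# Hypothetical helper: set soft and hard RLIMIT_NOFILE to the same value,
# as a worker does, but inside a subshell so the caller is unaffected.
apply_nofile_limit() {
    (
        # With neither -S nor -H, `ulimit -n` sets both limits at once.
        if ulimit -n "$1" 2>/dev/null; then
            echo "soft=$(ulimit -Sn) hard=$(ulimit -Hn)"
        else
            # Like NGINX, report the failure and carry on with the old limits.
            echo "failed: soft=$(ulimit -Sn) hard=$(ulimit -Hn)"
        fi
    )
}

apply_nofile_limit 64
echo "caller soft limit is still $(ulimit -Sn)"
```

Lowering the limits always succeeds. Raising the hard limit above its current value requires privilege, which is the usual reason the `setrlimit()` call fails.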

## The Relationship Between worker_rlimit_nofile and worker_connections

Understanding how these two directives interact is critical for proper tuning. Looking at NGINX source code (`ngx_event.c`):

```c
if (ecf->connections > (ngx_uint_t) rlmt.rlim_cur
    && (ccf->rlimit_nofile == NGX_CONF_UNSET
        || ecf->connections > (ngx_uint_t) ccf->rlimit_nofile))
{
    limit = (ccf->rlimit_nofile == NGX_CONF_UNSET) ?
                 (ngx_int_t) rlmt.rlim_cur : ccf->rlimit_nofile;

    ngx_log_error(NGX_LOG_WARN, cycle->log, 0,
                  "%ui worker_connections exceed "
                  "open file resource limit: %i",
                  ecf->connections, limit);
}
```

This reveals the **validation logic**:

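- NGINX checks whether `worker_connections` exceeds the process’s current soft limit on open files

- If `worker_rlimit_nofile` is set and is at least as large as `worker_connections`, the check passes, because each worker will apply that limit to itself

- Otherwise, NGINX emits a **warning** (reporting `worker_rlimit_nofile` if set, or the current limit if not) but doesn’t fail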
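To make the condition easier to experiment with, here is a minimal shell sketch of the same check. `check_worker_connections` is a hypothetical name, and its output only imitates the wording of the NGINX warning; it is not produced by NGINX itself.

```bash
# Sketch of the startup check. Arguments: worker_connections, the current
# soft limit, and optionally worker_rlimit_nofile (omit it if unset).
check_worker_connections() {
    local connections=$1 soft_limit=$2 rlimit_nofile=${3:-}
    local limit=${rlimit_nofile:-$soft_limit}

    if [ "$connections" -gt "$soft_limit" ] &&
       { [ -z "$rlimit_nofile" ] || [ "$connections" -gt "$rlimit_nofile" ]; }; then
        echo "warn: $connections worker_connections exceed open file resource limit: $limit"
    else
        echo "ok"
    fi
}

check_worker_connections 65535 1024          # directive unset, soft limit too low
check_worker_connections 65535 1024 65535    # directive covers the connections
check_worker_connections 20000 1024 10000    # directive set, but still too low
```

The first and third calls print a warning, while the second prints `ok`: a sufficiently large `worker_rlimit_nofile` silences the warning even when the shell's own soft limit is small.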

### The Formula You Actually Need

Each connection requires **at least one file descriptor** for the client socket. When proxying, you need **two** – one for the client, one for the upstream. For static files, you need additional descriptors.

The safe formula is:

```
worker_rlimit_nofile >= worker_connections × 2 + some_margin
```
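The formula is easy to turn into a quick check. In this sketch the function names are hypothetical and the default margin of 1024 descriptors is an assumed figure chosen for illustration, not a value NGINX defines.

```bash
# Smallest worker_rlimit_nofile satisfying the formula above.
# The margin (1024 by default) is an assumed figure, not an NGINX constant.
min_rlimit_nofile() {
    local worker_connections=$1 margin=${2:-1024}
    echo $(( worker_connections * 2 + margin ))
}

# Succeeds (exit status 0) when a configured limit meets the formula.
rlimit_is_sufficient() {
    local rlimit=$1 worker_connections=$2 margin=${3:-1024}
    [ "$rlimit" -ge "$(min_rlimit_nofile "$worker_connections" "$margin")" ]
}

min_rlimit_nofile 16384   # prints 33792
if rlimit_is_sufficient 65535 16384; then
    echo "65535 leaves enough headroom for 16384 worker_connections"
fi
rlimit_is_sufficient 65535 65535 || echo "65535 is too low for 65535 worker_connections"
```

The last line shows why setting both directives to 65535 fails the formula.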

For a typical reverse proxy setup:

```nginx
worker_processes auto;
worker_rlimit_nofile 65535;

events {
    worker_connections 16384; # Can handle ~8000 concurrent proxied connections per worker
}
```

## Finding Your Current System Limits

Before tuning NGINX, you must understand your system’s constraints:

### Check Current Limits

```bash
# View current soft and hard limits for open files
ulimit -Sn # Soft limit
ulimit -Hn # Hard limit

# System-wide maximum
cat /proc/sys/fs/file-max

# Currently open file descriptors system-wide
cat /proc/sys/fs/file-nr
```

### Check Per-Process Limits

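```bash
# Find NGINX worker PIDs
pgrep -f "nginx: worker"

# Count open file descriptors for a specific worker (replace <PID>)
ls /proc/<PID>/fd | wc -l

# Or use lsof for a detailed view (the count also includes a header line
# and non-descriptor entries such as mapped files)
lsof -p <PID> | wc -l
```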
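A raw count does not show what the descriptors are used for. As a rough sketch, the hypothetical helper below groups the entries of an fd directory by the target of each symlink. It assumes the Linux `/proc` layout, where sockets appear as `socket:[inode]` and pipes as `pipe:[inode]`.

```bash
# Hypothetical helper: summarize what the entries in an fd directory point to.
# Each entry is a symlink whose target looks like "socket:[12345]",
# "pipe:[678]", "anon_inode:[eventpoll]", or a filesystem path.
classify_fds() {
    local fd_dir=$1 entry target sockets=0 pipes=0 files=0 other=0
    for entry in "$fd_dir"/*; do
        target=$(readlink "$entry" 2>/dev/null) || continue
        case $target in
            socket:*) sockets=$((sockets + 1)) ;;
            pipe:*)   pipes=$((pipes + 1)) ;;
            /*)       files=$((files + 1)) ;;
            *)        other=$((other + 1)) ;;
        esac
    done
    echo "sockets=$sockets pipes=$pipes files=$files other=$other"
}

# Inspect the current shell as a stand-in; pass /proc/<PID>/fd for a worker.
classify_fds "/proc/$$/fd"
```

On a busy proxy the socket count should dominate. A large `files` count usually points at static content or an open file cache.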

### NGINX’s Startup Detection

When NGINX starts, it calls `getrlimit(RLIMIT_NOFILE)` to detect system limits. From `ngx_posix_init.c`:

```c
if (getrlimit(RLIMIT_NOFILE, &rlmt) == -1) {
    ngx_log_error(NGX_LOG_ALERT, log, errno,
                  "getrlimit(RLIMIT_NOFILE) failed");
    return NGX_ERROR;
}

ngx_max_sockets = (ngx_int_t) rlmt.rlim_cur;
```

The `ngx_max_sockets` value becomes NGINX’s baseline for file descriptor allocation.

## How to Properly Calculate worker_rlimit_nofile

### Step 1: Understand Your Workload

Different NGINX deployments have vastly different file descriptor requirements:

| Use Case | FDs per Connection | Notes |
|----------|--------------------|-------|
| Static files | 1 + files | Client socket + open files |
| Reverse proxy | 2 | Client + upstream sockets |
| WebSockets | 2 | Long-lived connections |
| SSL termination | 2 | Client + upstream; TLS itself adds no descriptors (a shared session cache lives in shared memory) |
| FastCGI/uWSGI | 2 | Client + backend socket |

### Step 2: Calculate Based on Expected Connections

For a server expecting 10,000 concurrent connections with reverse proxy:

```
Required FDs = 10,000 connections × 2 (client + upstream) = 20,000
Safety margin = 20% = 4,000
worker_rlimit_nofile = 24,000 (minimum)
```

That figure assumes a single worker carries every connection. If the load is spread evenly across 4 worker processes, each worker needs:

```
Per-worker connections = 10,000 ÷ 4 = 2,500
Per-worker FDs = 2,500 × 2 × 1.2 = 6,000
```
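The arithmetic above can be wrapped in a small function for trying other inputs. `per_worker_fds` is a hypothetical helper. Its defaults (2 descriptors per connection, 20% margin) mirror the reverse proxy example and are assumptions to adjust for other workloads.

```bash
# Descriptor budget for a reverse proxy.
# Arguments: concurrent client connections, worker count, FDs per connection
# (default 2), safety margin in percent (default 20).
per_worker_fds() {
    local connections=$1 workers=$2 fds_per_conn=${3:-2} margin_pct=${4:-20}
    # Round up so an uneven split never undercounts a worker's share.
    local per_worker=$(( (connections + workers - 1) / workers ))
    echo $(( per_worker * fds_per_conn * (100 + margin_pct) / 100 ))
}

per_worker_fds 10000 1   # one worker carrying everything: prints 24000
per_worker_fds 10000 4   # even split across 4 workers: prints 6000
```

Connections are rarely spread perfectly evenly, so sizing each worker for more than its even share is the cautious choice.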

### Step 3: Consider File Caching

If you use `open_file_cache`:

```nginx
open_file_cache max=10000 inactive=60s;
open_file_cache_valid 30s;
```

This alone can consume up to 10,000 additional file descriptors per worker.
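One way to fold the cache into the budget is to add its `max` value to the connection-based figure. The sketch below does that with a hypothetical `fd_budget_with_cache` helper. The default margin of 1024 is an assumed value, and the result is a worst case in which every cache slot holds an open descriptor.

```bash
# Worst-case per-worker budget including the open file cache.
# Arguments: worker_connections, open_file_cache max, margin (assumed 1024).
fd_budget_with_cache() {
    local worker_connections=$1 cache_max=$2 margin=${3:-1024}
    echo $(( worker_connections * 2 + cache_max + margin ))
}

fd_budget_with_cache 10000 10000   # prints 31024
```

For example, `fd_budget_with_cache 16384 20000` gives 53792, which still fits under a `worker_rlimit_nofile` of 65535.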

## Configuring worker_rlimit_nofile: Complete Examples

### Basic Reverse Proxy

```nginx
worker_processes auto;
worker_rlimit_nofile 30000;

events {
    worker_connections 10000;
    use epoll;
    multi_accept on;
}

http {
    # … your configuration
}
```

### High-Traffic Static File Server

```nginx
worker_processes auto;
worker_rlimit_nofile 65535;

events {
    worker_connections 16384;
    use epoll;
    multi_accept on;
}

http {
    open_file_cache max=20000 inactive=60s;
    open_file_cache_valid 30s;
    open_file_cache_min_uses 2;
    open_file_cache_errors on;

    sendfile on;
    tcp_nopush on;
    tcp_nodelay on;

    # … your configuration
}
```

### Extreme Scale (100K+ Connections)

```nginx
worker_processes auto;
worker_rlimit_nofile 524288; # 512K

events {
    worker_connections 131072; # 128K per worker
    use epoll;
    multi_accept on;
}

http {
    # Enable connection reuse
    keepalive_timeout 65;
    keepalive_requests 10000;

    # Upstream keepalive for reduced FD churn
    upstream backend {
        server 10.0.0.1:8080;
        keepalive 512;
    }

    # … your configuration
}
```

## System-Level Configuration for High File Descriptor Limits

Setting `worker_rlimit_nofile` in NGINX isn’t enough – the operating system must also support these limits.

### Increase System Limits

Edit `/etc/sysctl.conf`:

```bash
# Maximum number of file handles the kernel will allocate system-wide
fs.file-max = 2097152

# Maximum number of file descriptors a single process may open
# (the upper bound for any RLIMIT_NOFILE hard limit)
fs.nr_open = 2097152
```

Apply changes:

```bash
sysctl -p
```

### Increase User Limits

Edit `/etc/security/limits.conf`:

```bash
# For the nginx user (or www-data, depending on your setup)
nginx soft nofile 524288
nginx hard nofile 524288

# Or for all users
* soft nofile 524288
* hard nofile 524288
```

### Systemd Service Configuration

If NGINX is managed by systemd, create `/etc/systemd/system/nginx.service.d/limits.conf`:

```ini
[Service]
LimitNOFILE=524288
```

Reload systemd:

```bash
systemctl daemon-reload
systemctl restart nginx
```

### Verify Limits Are Applied

```bash
# Check NGINX worker process limits
cat /proc/$(pgrep -f "nginx: worker" | head -1)/limits | grep "open files"
```

Expected output:

```
Max open files 524288 524288 files
```
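For scripted checks, the same line can be parsed rather than read by eye. This is a minimal sketch with hypothetical helper names. It assumes the Linux `/proc/<PID>/limits` layout, in which the soft and hard values are the fourth and fifth whitespace-separated fields of the `Max open files` line.

```bash
# Hypothetical helper: print the soft and hard "Max open files" values from
# a limits file such as /proc/<PID>/limits.
nofile_limits() {
    awk '/^Max open files/ { print $4, $5 }' "$1"
}

# Succeeds when both limits in the file equal the expected value.
limits_match() {
    [ "$(nofile_limits "$1")" = "$2 $2" ]
}

# Check the current shell as a stand-in for a worker process.
if [ -r "/proc/$$/limits" ]; then
    nofile_limits "/proc/$$/limits"
fi
```

A deployment script could call `limits_match /proc/<PID>/limits 524288` for each worker and fail loudly when the configured value did not take effect.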

## Common Errors and How to Fix Them

### Error: “Too many open files”

**Cause**: `worker_rlimit_nofile` is too low, or system limits prevent NGINX from setting the requested value.

**Solution**:

1. Increase `worker_rlimit_nofile` in `nginx.conf`

2. Increase system limits (see above)

3. Check if systemd is overriding limits

### Error: “setrlimit(RLIMIT_NOFILE, X) failed”

**Cause**: NGINX cannot set the requested file descriptor limit because:

- The system hard limit is lower than the requested value

- NGINX is running as a non-root user that cannot exceed current limits

**Solution**:

```bash
# Check current hard limit
ulimit -Hn

# If too low, increase it in /etc/security/limits.conf
# Then restart the session and NGINX
```

### Warning: “worker_connections exceed open file resource limit”

**Cause**: `worker_connections` is higher than the available file descriptors.

**Solution**: Either increase `worker_rlimit_nofile` or decrease `worker_connections`:

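```nginx
# Ensure this formula holds:
# worker_rlimit_nofile >= worker_connections × 2
worker_rlimit_nofile 65535;

events {
    worker_connections 30000; # 30000 × 2 = 60000 < 65535
}
```

## Monitoring File Descriptor Usage

A simple loop over the worker processes shows how close each one is to its limit:

```bash
while true; do
    for pid in $(pgrep -f "nginx: worker"); do
        current_fds=$(ls /proc/$pid/fd 2>/dev/null | wc -l)
        max_fds=$(cat /proc/$pid/limits 2>/dev/null | grep "Max open files" | awk '{print $4}')
        # Skip workers that exited between pgrep and the reads above
        [ -n "$max_fds" ] || continue
        percent=$((current_fds * 100 / max_fds))
        echo "Worker $pid: $current_fds / $max_fds FDs ($percent%)"
    done
    echo "---"
    sleep 5
done
```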

### Using Prometheus and nginx-vts-exporter

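For production monitoring, use a metrics exporter. The directives below come from the third-party `nginx-module-vts` module, which must be compiled into or loaded by your NGINX build: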

```nginx
http {
    vhost_traffic_status_zone;

    server {
        location /status {
            vhost_traffic_status_display;
            vhost_traffic_status_display_format prometheus;
        }
    }
}
```

## Performance Implications of worker_rlimit_nofile

### Memory Overhead

Each open file descriptor consumes memory for:

- Kernel data structures (~1KB per FD)

- NGINX connection structures (~256 bytes per connection)

- SSL session data (if applicable, ~4KB per connection)

For 100,000 connections:

```
Kernel overhead: ~100MB
NGINX structures: ~25MB
SSL (if used): ~400MB
Total: ~525MB per worker
```
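To redo this estimate for a different connection count, the per-item figures can be plugged into a short function. The sketch below reuses the rough costs listed above, which are approximations rather than measured values. `estimate_memory_mb` is a hypothetical helper.

```bash
# Rough per-worker memory estimate in MB, using the approximate per-item
# costs quoted above (1 KB is treated as 1000 bytes to match the rounding).
estimate_memory_mb() {
    local connections=$1 use_ssl=${2:-yes}
    local kernel=$(( connections * 1000 / 1000000 ))
    local nginx=$(( connections * 256 / 1000000 ))
    local ssl=0
    if [ "$use_ssl" = yes ]; then
        ssl=$(( connections * 4000 / 1000000 ))
    fi
    echo "kernel=${kernel}MB nginx=${nginx}MB ssl=${ssl}MB total=$(( kernel + nginx + ssl ))MB"
}

estimate_memory_mb 100000      # with SSL
estimate_memory_mb 100000 no   # without SSL
```

Without SSL the same 100,000 connections come to about 125MB, which shows how much of the total is TLS state.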

### CPU Considerations

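A higher file descriptor limit costs nothing by itself, but the extra connections it permits mean:

- More connections to manage per event loop iteration

- Larger kernel and NGINX data structures to keep in memory

- More memory bandwidth requirements

With `epoll` (Linux), this scales efficiently to very large connection counts. `epoll_wait()` returns only the descriptors that are ready, so its cost tracks the number of active connections rather than the number of open ones, and registering or removing a descriptor takes roughly logarithmic time.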

## Best Practices Summary

1. **Never set worker_rlimit_nofile blindly** – calculate based on your actual needs

2. **Use the 2× rule**: `worker_rlimit_nofile >= worker_connections × 2`

3. **Account for file caching**: Add `open_file_cache max` value to your calculation

4. **Set system limits first**: `/etc/security/limits.conf` and `sysctl.conf`

5. **Use systemd overrides**: Essential for systemd-managed NGINX

6. **Monitor in production**: Track actual FD usage before and after tuning

7. **Test under load**: Use tools like `wrk` or `ab` to verify your configuration handles expected traffic

## Conclusion

Tuning `worker_rlimit_nofile` in NGINX is essential for high-performance deployments. Unlike cargo cult configuration that suggests arbitrary values, understanding the relationship between file descriptors, `worker_connections`, and your actual workload allows you to configure NGINX optimally.

Key takeaways:

- The directive controls file descriptor limits **per worker process**

- NGINX uses `setrlimit()` to apply the limit after forking workers

- System limits must be configured to support your desired NGINX limits

- Monitor actual file descriptor usage to validate your configuration

By following the guidelines in this article, you’ll avoid the common pitfalls of both under-provisioning (causing “too many open files” errors) and over-provisioning (wasting system resources).