yum upgrades for production use, this is the repository for you.

Active subscription is required.

📅 Updated: November 17, 2023 (Originally published: September 9, 2021)

EPEL metalink errors

EPEL is a great third-party repository for RHEL and derivative operating systems. It holds a special position among third-party repositories.

It has a huge number of packages available, which is why it can be regarded as an extension of the operating system itself.

It has a non-commercial status, although it is largely supported by Red Hat employees who dedicate their free time to it.

However, its non-commercial status is the very thing that “causes” issues in its infrastructure. Have you ever been unable to update anything because of this dreaded metalink error?

Errors during downloading metadata for repository 'epel-modular':

- Status code: 503 for https://mirrors.fedoraproject.org/metalink?repo=epel-modular-8&arch=x86_64&infra=container&content=pub/rocky (IP: x.x.x.x)

Error: Failed to download metadata for repo 'epel-modular': Cannot prepare internal mirrorlist: Status code: 503 for https://mirrors.fedoraproject.org/metalink?repo=epel-modular-8&arch=x86_64&infra=container&content=pub/rocky (IP: x.x.x.x)

TL;DR

The new epel.cloud project is a simple front-end to the sluggish EPEL repository. Thanks to efficient caching rules and the Cloudflare CDN, it solves all the problems once and for all (yes, I said it).

Upgrade or install the EPEL repository configuration for your OS that does not use the metalink feature and instead relies on the Cloudflare CDN.

Your yum/dnf will become much faster and more reliable thanks to this change.

Before anything, get rid of any customizations in your EPEL configuration, e.g.:

sudo rm -rf /etc/yum.repos.d/epel*

Then install the OS-specific repository configuration:

CentOS/RHEL 7

sudo yum -y install https://epel.cloud/pub/epel/epel-release-latest-7.noarch.rpm

CentOS/RHEL 8

sudo dnf -y install https://epel.cloud/pub/epel/epel-release-latest-8.noarch.rpm

CentOS/RHEL 9

sudo dnf -y install https://epel.cloud/pub/epel/epel-release-latest-9.noarch.rpm

That’s all there is to it. If you are curious how this solves anything, read more to find out.

Metalinks, you say?

EPEL relies on dozens and dozens of mirrors which are managed by MirrorManager software that is capable of creating a metalink file. This allows EPEL to create a sort of “CDN” service, where edges are provided by volunteer organizations.

However, the metalink file itself is hosted on the EPEL project’s servers and is hardly distributed across the globe.

Thus, whenever EPEL’s own infrastructure is in a degraded state, yum will be broken for you. And even when things do work, there is no good CDN behind them.

You can easily end up using a slow server, should the third-party volunteers run out of capacity on their servers.

I wouldn’t be surprised if the downtimes with the metalinks are caused by Red Hat trying to upsell its more reliable repository infrastructure, given the unquestionable connection between Red Hat employees and EPEL infra.

It’s worth noting that there are some rather reliable mirrors like `https://mirror.yandex.ru/epel/7/x86_64/repodata/`, but they do not provide a real CDN when you use them.

But why do we need a true CDN for EPEL? Because we can, and because with the many POPs available via a professional CDN like Cloudflare, we get the fastest package and metadata downloads!

It’s even possible to create a DIY Cloudflare-powered EPEL CDN, and here I just list a few ingredients used to run epel.cloud.

Knowing our enemy

A perfect RPM repository, which we can deem EPEL to be, follows one strong pattern.

Its URLs are immutable! This means that each unique RPM package build is hosted at a unique URL that typically includes the package name, version, and release number.

Repository metadata files (repodata) are also hosted at immutable URLs, which are generated whenever a repository is updated. The only notable exception is the repomd.xml file, which has to stay at the same URL, simply because RPM clients must have a single known reference from which to fetch the actual data.

What does this all mean?! We can aggressively cache everything on Cloudflare, even repomd.xml.

Package URLs never change, so we never need to clear their cache. The only changing part is repomd.xml, and we can still cache it aggressively, but we need to ensure its Cloudflare cache is cleared whenever the repository is updated.
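The caching policy above can be sketched as a small function. This is only an illustration and not the actual epel.cloud configuration: the function name cache_policy, the shape of the returned dictionary, and the 30-day TTL value are assumptions made for the example.

```python
from posixpath import basename

# Assumed value for illustration: a 30-day edge TTL, in seconds.
ONE_MONTH = 30 * 24 * 60 * 60


def cache_policy(url_path: str) -> dict:
    """Return an illustrative edge-cache policy for a repository URL path."""
    if basename(url_path) == "repomd.xml":
        # The one mutable entry point: cache it, but purge on repo updates.
        return {"edge_ttl": ONE_MONTH, "purge_on_update": True}
    # RPMs and hashed repodata files sit at immutable URLs: never purge.
    return {"edge_ttl": ONE_MONTH, "purge_on_update": False}


print(cache_policy("/pub/epel/7/x86_64/repodata/repomd.xml"))
print(cache_policy("/pub/epel/7/x86_64/Packages/0/0ad-0.0.22-1.el7.x86_64.rpm"))
```

Both kinds of URL get the same long TTL; the only difference is whether a purge is ever needed.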

Cloudflare

We need a separate domain (we use epel.cloud) pointed at the origin, dl.fedoraproject.org, via CNAME records for both www and the apex domain:

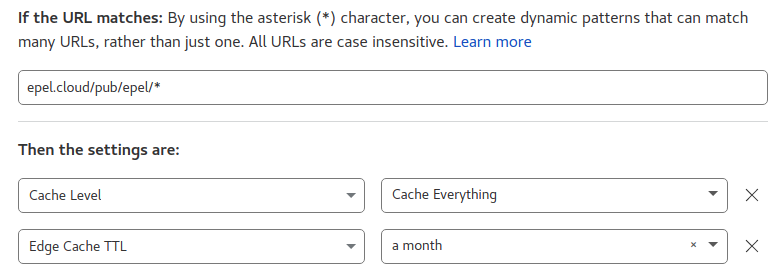

Cloudflare’s free account (and that’s all that is needed) does not cache RPMs unless we set a Cache Everything rule. And so, we set it. And thanks to the nature of the RPM filenames, these can be cached as immutable resources. We can actually give all files the maximum Edge Cache TTL of 1 month, and ensure that repomd.xml is purged when our metadata sync detects a change in the repositories.

The packages listed in EPEL’s repodata are referenced with URIs relative to the parent directory of each repodata directory.

This makes it possible to route our requests conditionally and achieve our goals of implementing “CDN for EPEL” without having to store any of RPM packages on our servers at all!
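To illustrate the relative links, here is a minimal sketch that resolves the hrefs found in a repomd.xml against the parent of its repodata directory. The sample XML is shortened and made up (real files also carry checksums, sizes, and timestamps), and the helper names repo_root and metadata_urls are hypothetical.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

# XML namespace used by repomd.xml files.
REPO_NS = {"repo": "http://linux.duke.edu/metadata/repo"}

# Shortened, made-up sample for illustration only.
SAMPLE_REPOMD = """<repomd xmlns="http://linux.duke.edu/metadata/repo">
  <data type="primary">
    <location href="repodata/abc123-primary.xml.gz"/>
  </data>
  <data type="filelists">
    <location href="repodata/def456-filelists.xml.gz"/>
  </data>
</repomd>
"""


def repo_root(repomd_url: str) -> str:
    """Return the directory that is the parent of repodata/."""
    return urljoin(repomd_url, "../")


def metadata_urls(repomd_xml: str, repomd_url: str) -> dict:
    """Map each metadata type to its absolute URL."""
    root = ET.fromstring(repomd_xml)
    urls = {}
    for data in root.findall("repo:data", REPO_NS):
        href = data.find("repo:location", REPO_NS).get("href")
        urls[data.get("type")] = urljoin(repo_root(repomd_url), href)
    return urls


repomd = "https://epel.cloud/pub/epel/7/x86_64/repodata/repomd.xml"
print(metadata_urls(SAMPLE_REPOMD, repomd))
# A package href from the primary metadata resolves the same way:
print(urljoin(repo_root(repomd), "Packages/0/0ad-0.0.22-1.el7.x86_64.rpm"))
```

Because every href is relative, the same metadata works unchanged no matter which host name serves it.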

A request for repodata that arrives at epel.cloud (e.g. from a yum client) is fetched from the Fedora EPEL servers only on the first cache miss (for a specific geolocation).

Subsequent requests are served entirely from Cloudflare’s fast infrastructure.

Requests for RPM packages likewise hit Cloudflare, which proxies them to the origin EPEL mirrors. The response is then happily cached by Cloudflare, thanks to the Cache Everything rule.

A Linux server

We need a Linux server that can do rsync from machines that already have EPEL repository files. We only need metadata.

This simple Linux machine will serve only one goal: sync repodata, and invalidate our aggressive Cloudflare caching upon change.

You can see the simplicity that makes it possible to finally have reliable EPEL package downloads.

Syncing the metadata

We need to sync metadata for each and every actual EPEL sub-repository, including SRPMs. E.g.:

- https://dl.fedoraproject.org/pub/epel/7/SRPMS/repodata/

- https://dl.fedoraproject.org/pub/epel/7/aarch64/repodata/

- etc.

Mind that every different architecture has its own repository, and a debug subrepository.
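The list of sub-repositories can be generated rather than typed by hand. The sketch below follows the EPEL 7 layout of the examples above; the debug/ directory name, the architecture list, and the function name repodata_urls are assumptions, and other EPEL releases may lay out their directories differently.

```python
BASE = "https://dl.fedoraproject.org/pub/epel"


def repodata_urls(releases, arches, base=BASE):
    """List repodata URLs for SRPMS, each architecture, and its debug sub-repo."""
    urls = []
    for release in releases:
        urls.append(f"{base}/{release}/SRPMS/repodata/")
        for arch in arches:
            urls.append(f"{base}/{release}/{arch}/repodata/")
            # Assumed location of the debug sub-repository.
            urls.append(f"{base}/{release}/{arch}/debug/repodata/")
    return urls


for url in repodata_urls(["7"], ["x86_64", "aarch64"]):
    print(url)
```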

To sync a repo we can run rsync against a capable mirror.

E.g. for the location https://dl.fedoraproject.org/pub/epel/7/x86_64/repodata/ we can use the following rsync command:

rsync -az --delete rsync://dl.fedoraproject.org/fedora-epel/7/x86_64/repodata/ /srv/www/epel.cloud/public/7/x86_64/repodata/

While this works, it may give a capacity-related error later on:

@ERROR: max connections (20) reached -- try again later

Thus we need to use a more capable mirror:

rsync -az --delete rsync://mirror.yandex.ru/fedora-epel/7/x86_64/repodata/ /srv/www/epel.cloud/public/7/x86_64/repodata/

Our file structure on the Linux server can be similar to the actual Fedora EPEL, except we only need metadata and only for EPEL repositories.

The closing step is a simple script that syncs all EPEL repositories’ metadata and purges cache for changed repomd.xml URLs.
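The sync half of such a script could look roughly like the sketch below. It is not the actual epel.cloud script: the function names are hypothetical, the change detection via a SHA-256 of repomd.xml is an assumption, and purging the changed URLs is left to the caller. The rsync flags are the same ones used in the commands above.

```python
import hashlib
import subprocess
from pathlib import Path


def repomd_digest(repodata_dir: Path):
    """SHA-256 of repomd.xml, or None if it has not been synced yet."""
    repomd = repodata_dir / "repomd.xml"
    if not repomd.is_file():
        return None
    return hashlib.sha256(repomd.read_bytes()).hexdigest()


def sync_repo(rsync_url: str, repodata_dir: Path, run=subprocess.run) -> bool:
    """Sync one repodata directory; return True if repomd.xml changed."""
    before = repomd_digest(repodata_dir)
    repodata_dir.mkdir(parents=True, exist_ok=True)
    # The `run` parameter exists only so the rsync call can be swapped out.
    run(["rsync", "-az", "--delete", rsync_url, f"{repodata_dir}/"], check=True)
    return repomd_digest(repodata_dir) != before
```

A caller would loop over all sub-repositories, collect the repomd.xml URLs for which sync_repo returned True, and purge only those from the Cloudflare cache.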

A further improvement to the whole sync-and-purge routine for repomd.xml files is to check them upstream directly, using the Last-Modified header.

This makes the syncing much faster, and it requires neither storage nor a Linux server.

The whole sync runs on GitHub via a scheduled workflow.
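A minimal sketch of this header-based check follows, assuming the previously seen Last-Modified value is kept somewhere between runs (how it is stored is not covered here). The function names are hypothetical. The purge request targets Cloudflare's purge_cache endpoint with a list of file URLs; the zone ID and API token are placeholders you would supply.

```python
import json
import urllib.request
from email.utils import parsedate_to_datetime


def last_modified(url: str) -> str:
    """Fetch the Last-Modified header of a URL with a HEAD request."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.headers.get("Last-Modified", "")


def is_newer(upstream: str, seen: str) -> bool:
    """True if the upstream Last-Modified value is later than the one seen before."""
    if not upstream:
        return False
    if not seen:
        return True
    return parsedate_to_datetime(upstream) > parsedate_to_datetime(seen)


def purge_request(zone_id: str, api_token: str, urls: list) -> urllib.request.Request:
    """Build (but do not send) a Cloudflare purge-by-URL request."""
    return urllib.request.Request(
        f"https://api.cloudflare.com/client/v4/zones/{zone_id}/purge_cache",
        data=json.dumps({"files": urls}).encode(),
        headers={
            "Authorization": f"Bearer {api_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

A scheduled job would call last_modified on each upstream repomd.xml, compare it with is_newer, and pass the matching epel.cloud URLs to purge_request before sending it with urllib.request.urlopen.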

Repository configuration

An extra step is making our repository configuration easy to install and able to upgrade from the actual EPEL repository configuration.

This was achieved by rebuilding the corresponding packages with Epoch: 1 and Provides: for prior epoch value (value of “none”).

Results

Packages are cached at Cloudflare and fast to download

A simple assessment is due right now. Let’s compare the following:

https://epel.cloud/pub/epel/7/x86_64/Packages/0/0ad-0.0.22-1.el7.x86_64.rpm

https://dl.fedoraproject.org/pub/epel/7/x86_64/Packages/0/0ad-0.0.22-1.el7.x86_64.rpm

On an empty Cloudflare cache, the download speeds will be under 1 Mbps. But what happens when someone requested the package already?

[danila@life ~]$ axel https://epel.cloud/pub/epel/7/x86_64/Packages/0/0ad-0.0.22-1.el7.x86_64.rpm

Initializing download: https://epel.cloud/pub/epel/7/x86_64/Packages/0/0ad-0.0.22-1.el7.x86_64.rpm

File size: 3.68813 Megabyte(s) (3867288 bytes)

Opening output file 0ad-0.0.22-1.el7.x86_64.rpm.6

Starting download

Connection 0 finished

Downloaded 3.68813 Megabyte(s) in 1 second(s). (3382.52 KB/s)

The download can be 3 times faster!

Metadata is cached on Cloudflare and is fast to download

No metalink errors as we now have a true CDN

Mission accomplished.